Client details have been anonymised at their request. Industry, findings, and outcomes are real.

The Challenge

A UK challenger bank with over 500,000 customers was preparing for an FCA review of their Consumer Duty compliance. Their internal accessibility audit showed WCAG 2.1 AA compliance across their core digital products. They believed they were compliant.

But their customer complaints data told a different story. A disproportionate number of account opening abandonments and support calls came from customers who self-identified as having ADHD or dyslexia. The bank could not explain the gap between their compliance status and their customer outcomes.

The compliance paradox: This bank passed every automated WCAG scan, and its internal audit flagged nothing critical. Yet neurodivergent customers were abandoning the account opening journey at double the rate of neurotypical customers. Automated testing catches roughly 30% of barriers. Finding the other 70% requires human testers.

What Neurodivergent Testing Revealed

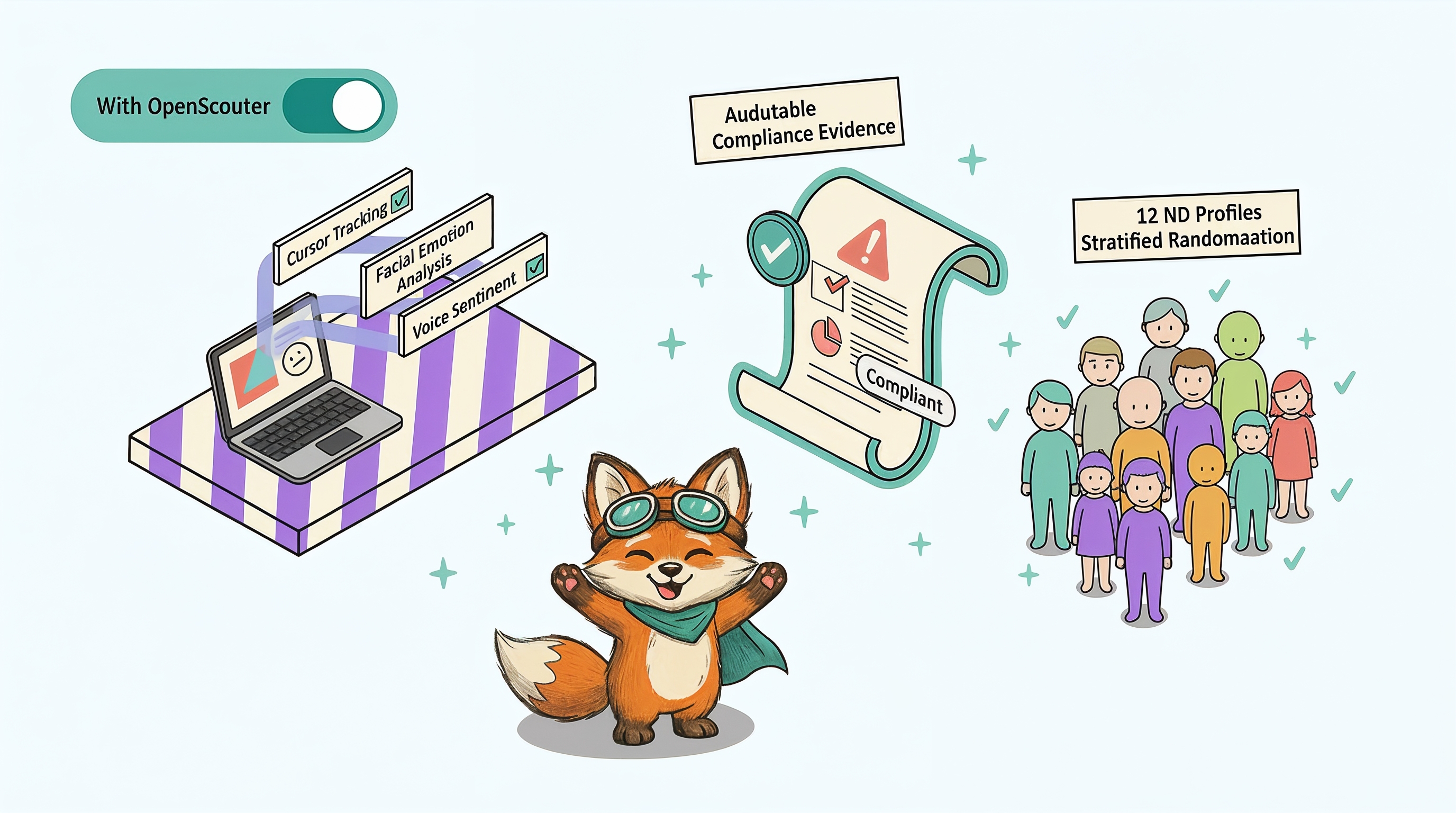

OpenScouter ran structured tests with 12 neurodivergent testers (4 with ADHD, 4 with dyslexia, 2 autistic, 2 with dyscalculia) across three critical journeys: account opening, first payment, and dispute resolution.

The results were significant. Although the products had passed automated WCAG scans, the testing identified 47 accessibility barriers affecting neurodivergent users. These fell into four categories:

| Barrier Category | Issues Found | Severity | Primary Condition Affected |
|---|---|---|---|
| Cognitive Overload | 18 | Critical / Serious | ADHD, Dyslexia, Autism |
| Unclear Error Handling | 12 | Serious / Moderate | ADHD, Dyscalculia |
| Timing Pressure | 9 | Critical / Serious | All conditions |
| Inconsistent Navigation | 8 | Serious / Moderate | Autism, ADHD |
| Total | 47 | | |

Cognitive Overload (18 issues)

- The account opening form had 23 fields across 7 steps with no progress indicator. ADHD testers lost track of where they were and abandoned at step 4 or 5.

- Terms and conditions were presented as a 4,200-word block of text. Dyslexic testers could not parse the content and either skipped it entirely or abandoned the process.

- Multiple competing calls to action on the dashboard created decision paralysis for autistic testers.

Unclear Error Handling (12 issues)

- Error messages used technical language ("validation failed on field reference") that did not explain what the user needed to fix.

- Errors appeared at the top of the page, but the problematic field was scrolled out of view. Testers could not connect the error message to the field.

- Sort code validation rejected valid formats (with spaces, with dashes) without explaining the expected format.
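The sort code and error-message findings above can be illustrated with a small sketch. It assumes a UK sort code is six digits that users may type with spaces or dashes. The function name `parseSortCode`, the result shape, and the message wording are hypothetical and are not taken from the bank's codebase.

```typescript
// Hypothetical helper: names and message wording are illustrative only.
interface SortCodeResult {
  value: string | null; // normalised as "12-34-56" when the input is valid
  error: string | null; // plain-language guidance when the input is invalid
}

function parseSortCode(input: string): SortCodeResult {
  // Accept "123456", "12 34 56" and "12-34-56" by dropping spaces and dashes.
  const digits = input.replace(/[\s-]/g, "");

  if (!/^\d{6}$/.test(digits)) {
    // Say what to fix and show the expected format, instead of
    // a technical message such as "validation failed on field reference".
    return {
      value: null,
      error:
        "Enter a 6-digit sort code, for example 12-34-56. Spaces and dashes are fine.",
    };
  }

  const value =
    digits.slice(0, 2) + "-" + digits.slice(2, 4) + "-" + digits.slice(4, 6);
  return { value, error: null };
}
```

Returning the message alongside the result lets the form render it next to the field it belongs to, rather than only at the top of the page.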

Timing Pressure (9 issues)

- Session timeouts during ID verification gave no warning. Three testers were logged out mid-task and lost their progress.

- A 60-second countdown on the 2FA code entry caused anxiety for testers who needed more time to switch between apps.

- Auto-advancing carousels on the dashboard moved too quickly for testers with slower processing speed.
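One way to avoid the silent logouts described above is to warn before the session expires and let the user extend it. The sketch below shows only the timing logic. The 10-minute limit and 2-minute warning window are assumed values, since the case study does not state the bank's real settings, and the function names are hypothetical.

```typescript
// Assumed values for illustration; the bank's real settings are not stated.
const SESSION_TIMEOUT_MS = 10 * 60 * 1000; // idle limit before logout
const WARNING_LEAD_MS = 2 * 60 * 1000; // how long before expiry to warn

type SessionState = "active" | "warning" | "expired";

// Decide what the UI should show, given the last activity time and the current time.
function sessionState(lastActivityMs: number, nowMs: number): SessionState {
  const idleMs = nowMs - lastActivityMs;
  if (idleMs >= SESSION_TIMEOUT_MS) return "expired";
  if (idleMs >= SESSION_TIMEOUT_MS - WARNING_LEAD_MS) return "warning";
  return "active";
}

// One-click extension: treat the click as fresh activity and restart the clock.
function extendSession(nowMs: number): number {
  return nowMs;
}
```

In the `"warning"` state the page would show a dialog with an extend button whose handler stores the value returned by `extendSession`. This warn-and-extend pattern is the kind of behaviour covered by WCAG's Timing Adjustable criterion (2.2.1).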

Inconsistent Navigation (8 issues)

- The "back" button in the account opening flow did not return to the previous step. It returned to the marketing page, destroying all progress.

- Navigation labels changed between the app and the web portal for the same features ("Transfers" vs "Send Money" vs "Payments").

- The hamburger menu opened from the right on some pages and the left on others.
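The back-button finding comes down to how a multi-step flow tracks its position. A minimal sketch follows, assuming the flow keeps its own step index and entered answers in one state object. All names are hypothetical, and the case study does not describe how the bank implemented its fix.

```typescript
// Hypothetical state model for a multi-step form.
interface FlowState {
  steps: string[]; // ordered step names
  index: number; // current position in steps
  answers: Record<string, string>; // data entered so far
}

function startFlow(steps: string[]): FlowState {
  return { steps, index: 0, answers: {} };
}

function goNext(state: FlowState): FlowState {
  const index = Math.min(state.index + 1, state.steps.length - 1);
  return { ...state, index };
}

// Back moves one step earlier and keeps the answers; it never leaves the flow.
function goBack(state: FlowState): FlowState {
  const index = Math.max(state.index - 1, 0);
  return { ...state, index };
}

// Text for a progress indicator, in the "step X of Y" style.
function progressLabel(state: FlowState): string {
  return "Step " + (state.index + 1) + " of " + state.steps.length;
}
```

Because `goBack` only changes the index, anything the user has already entered is still there when they return to a step.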

What the Bank Did

The bank prioritised fixes by user impact, not WCAG severity. Over 8 weeks, their engineering team addressed the findings in three sprints:

- Sprint 1 (Weeks 1-3): Error Handling and Orientation

  Fixed the 12 error handling issues. Added inline error messages pointing to the specific field. Added a progress indicator to the account opening flow showing step X of Y. Added session timeout warnings with one-click extension options.

- Sprint 2 (Weeks 4-6): Form Simplification and Language

  Simplified the account opening form from 23 fields to 14 by deferring non-essential fields to post-onboarding. Broke terms and conditions into digestible sections with plain-language summaries. Added contextual help tooltips for banking jargon.

- Sprint 3 (Weeks 7-8): Navigation Consistency

  Standardised navigation labels across all platforms. Fixed the back button behaviour to return to the previous step, not the homepage. Removed auto-advancing carousels and replaced them with user-controlled tabs.

The Results

After remediation, OpenScouter ran a second round of testing with the same tester profiles:

The Compliance Outcome

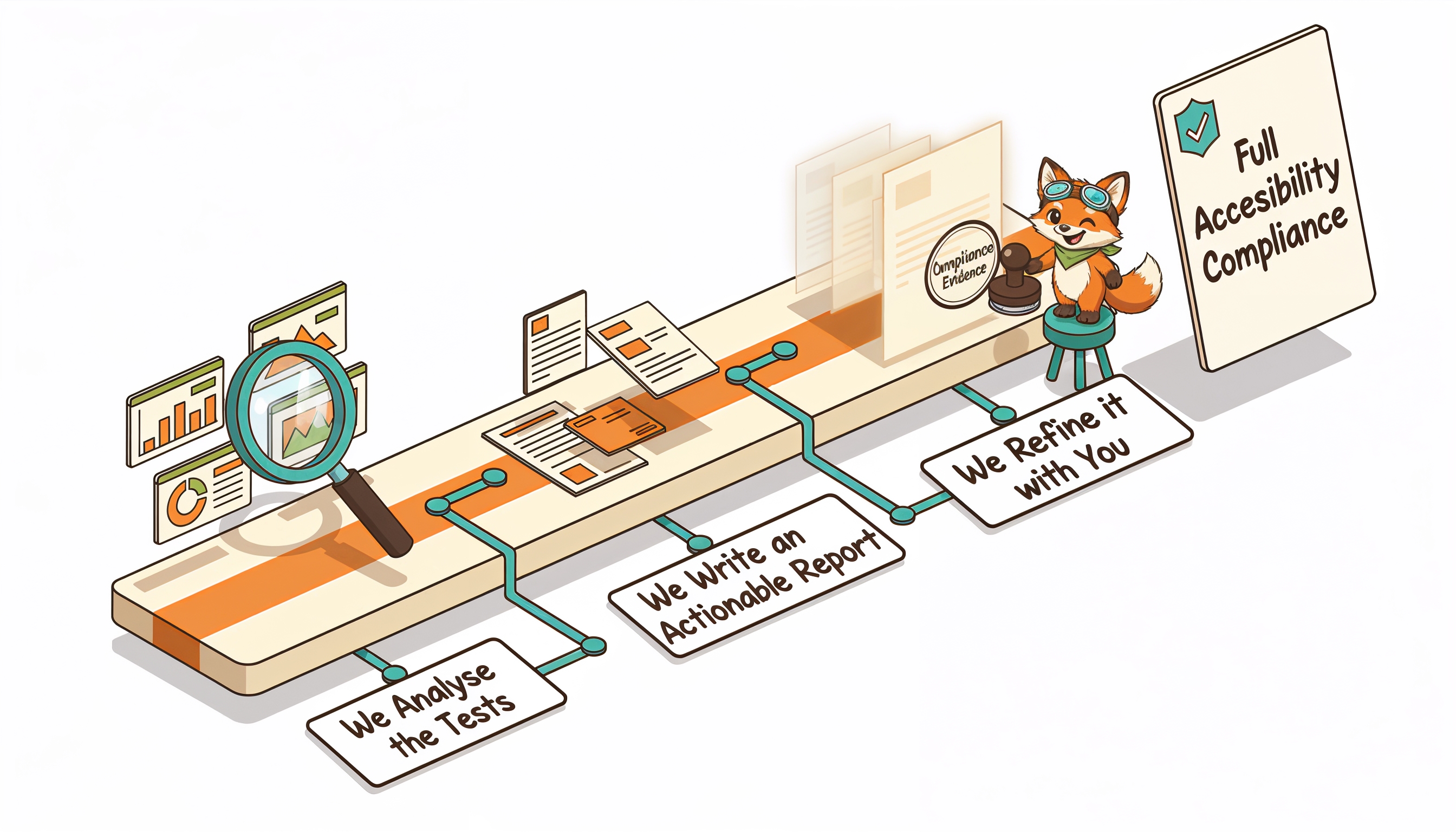

When the FCA review arrived, the bank presented both rounds of OpenScouter reports as evidence of their Consumer Duty compliance approach. The reports demonstrated:

- They had tested with real users from their target market, including vulnerable customers

- They had identified and prioritised accessibility barriers by user impact

- They had measurable improvement in outcomes for neurodivergent users

- They had an ongoing testing plan to maintain compliance

The FCA review concluded without enforcement action.

Key Takeaways

- Passing automated WCAG scans is not enough. This bank was technically compliant but still creating significant barriers for neurodivergent customers.

- Test with real users, not just expert reviewers. The 47 issues found by neurodivergent testers were invisible to the automated scan and the internal expert audit.

- Prioritise by user impact. Fixing error handling and form complexity had more impact than fixing colour contrast ratios.

- Before-and-after testing proves improvement. Regulators want evidence of outcomes, not just intent.

The FCA's position is clear: Consumer Duty compliance requires you to demonstrate outcomes for customers with characteristics of vulnerability, including neurodivergent conditions. A passing WCAG score is not sufficient. You need user testing evidence, before-and-after metrics, and a documented remediation process.

Facing a compliance review? Start with a free accessibility audit to understand where your products stand before the regulator asks.