Why Compare OpenScouter and UserTesting?

UserTesting is the largest general-purpose user research platform in the world. It is excellent at what it does: rapid unmoderated usability testing with a broad panel of participants. But if your primary goal is accessibility compliance and understanding how neurodivergent users experience your product, UserTesting and OpenScouter serve fundamentally different purposes.

This comparison helps you decide which platform fits your needs, or whether you need both.

The Core Difference: General Usability vs Accessibility-First

UserTesting recruits from a general population panel. You can filter by demographics, but the platform was not built for accessibility testing. Testers are not screened for disabilities or neurodivergent conditions, sessions do not capture the behavioural signals that reveal accessibility barriers, and reports do not map findings to WCAG or regulatory frameworks.

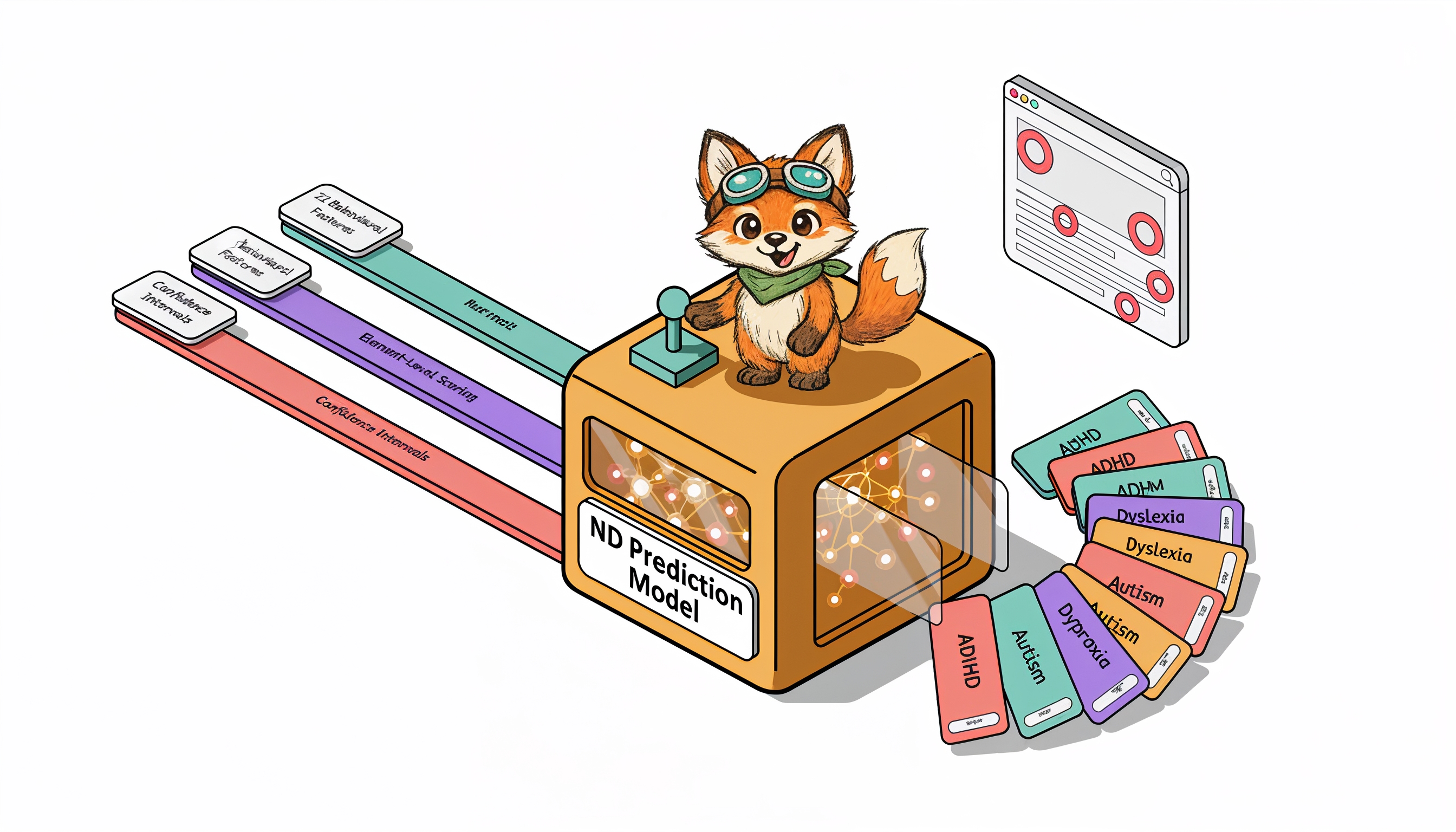

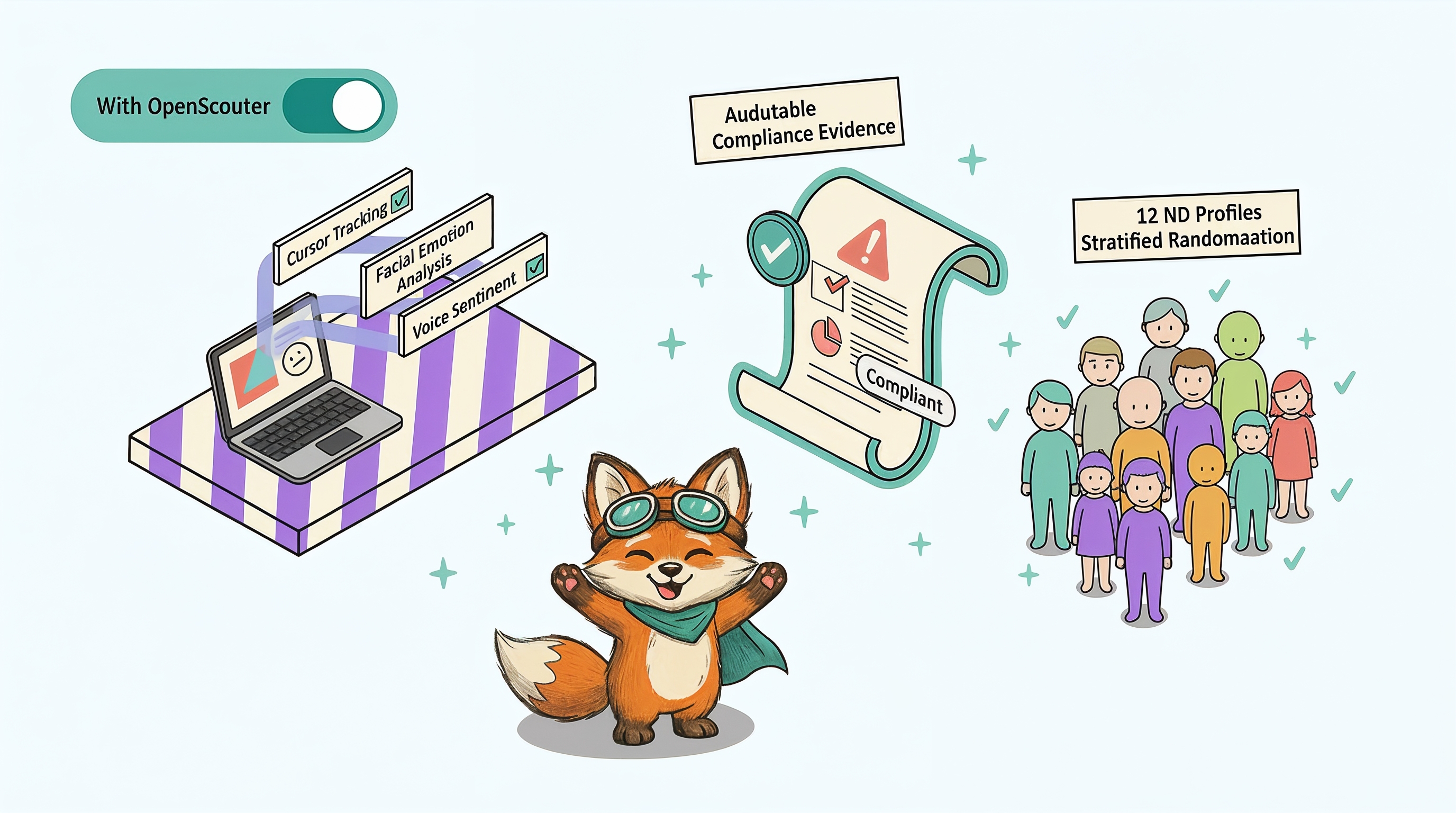

OpenScouter was built from the ground up for neurodivergent accessibility testing. Every tester is neurodivergent (ADHD, dyslexia, autism, dyspraxia, and other conditions). Sessions capture three data streams simultaneously: behavioural events, facial expressions, and voice feedback. Reports map every finding to WCAG 2.2 criteria and regulatory requirements like the FCA Consumer Duty.

Feature-by-Feature Comparison

| Feature | OpenScouter | UserTesting |
|---|---|---|
| Tester panel | Curated neurodivergent testers (ADHD, dyslexia, autism, dyspraxia) | 3.3M+ general participants; limited disability screening |
| Data capture | 3 streams: browser events, facial expressions, voice transcripts | Screen recording + think-aloud audio only |
| WCAG mapping | Every finding mapped to WCAG 2.2 success criteria | No WCAG mapping; manual interpretation required |
| Regulatory evidence | Reports structured for FCA Consumer Duty, EAA, Equality Act | Not designed for compliance evidence |
| Report format | AI-generated compliance report with severity scoring | Video highlights and researcher notes |
| Cross-tester analysis | AI synthesis across all neurodivergent profiles | Manual analysis required |
| Pricing model | Per-test; no annual commitment required | Enterprise annual contracts ($20k+/year) |
| Turnaround | Days | Hours to days (but no compliance output) |
| Industry focus | Financial services, insurance, healthcare, government | General (consumer, enterprise, SaaS) |
| Facial expression analysis | Yes: confusion, frustration, anxiety detected in real time | No |

Tester Panel

UserTesting: 3.3 million+ general participants worldwide. Can filter by basic demographics (age, gender, location, income). Limited ability to recruit disabled or neurodivergent participants specifically.

OpenScouter: Curated panel of neurodivergent testers with verified conditions. Every tester brings lived experience of navigating digital products with ADHD, dyslexia, autism, or other neurodivergent conditions. Testers are trained in structured accessibility evaluation.

Data Capture

UserTesting: Screen recording and think-aloud audio. No behavioural event tracking, no facial expression analysis, no structured accessibility annotation.

OpenScouter: Three simultaneous data streams. Browser events (clicks, scrolls, navigation patterns, hesitations, rage clicks), facial expression analysis (confusion, frustration, anxiety signals via webcam), and voice transcripts with sentiment analysis. All correlated on a single timeline.
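To make "correlated on a single timeline" concrete, here is a minimal sketch of how three timestamped streams could be merged and queried. It is an illustration only: the `Signal` type, the stream names, the sample data, and the two-second window are all assumptions, not OpenScouter's actual data model or API.

```python
from dataclasses import dataclass
from heapq import merge


@dataclass(frozen=True)
class Signal:
    """One observation from a session (hypothetical shape)."""
    t: float      # seconds since session start
    stream: str   # "browser", "face", or "voice"
    label: str    # e.g. "rage_click", "frustration", or a transcript snippet


def build_timeline(*streams):
    """Merge per-stream lists (each already sorted by time) into one timeline."""
    return list(merge(*streams, key=lambda s: s.t))


def signals_near(timeline, t, window=2.0):
    """Return every signal within `window` seconds of time `t`."""
    return [s for s in timeline if abs(s.t - t) <= window]


# Made-up sample data for a single session.
browser = [Signal(3.1, "browser", "hesitation"), Signal(12.4, "browser", "rage_click")]
face = [Signal(11.9, "face", "frustration")]
voice = [Signal(13.0, "voice", "I can't find the submit button")]

timeline = build_timeline(browser, face, voice)

# What else was happening around the rage click at 12.4 seconds?
for s in signals_near(timeline, 12.4):
    print(f"{s.t:>5.1f}s  {s.stream:<8} {s.label}")
```

The point of a shared timeline is that a single behavioural event, such as the rage click here, can be read alongside the facial and spoken signals that occurred around the same moment.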

Reporting

UserTesting: Video highlight reels and researcher notes. Useful for stakeholder buy-in but not structured for compliance evidence. No WCAG mapping, no severity scoring against accessibility standards.

OpenScouter: AI-generated compliance reports that map every finding to WCAG 2.2 success criteria. Severity scores based on user impact, not just technical classification. Cross-tester synthesis that identifies patterns across multiple neurodivergent profiles. Reports are structured for regulatory evidence (FCA Consumer Duty, EAA, Equality Act).
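As a rough illustration of what a finding mapped to a WCAG 2.2 success criterion and scored on user impact might look like as data, consider the sketch below. The field names, the severity thresholds, and the sample findings are hypothetical; the source does not describe OpenScouter's real report schema or scoring method. The two criteria named are real WCAG 2.2 success criteria.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One accessibility finding (hypothetical report entry)."""
    summary: str
    wcag_criterion: str    # WCAG 2.2 success criterion number and name
    testers_affected: int
    testers_total: int
    blocked_task: bool     # did the barrier stop testers completing the task?

    def severity(self) -> str:
        """Illustrative impact-based score; thresholds are assumptions."""
        share = self.testers_affected / self.testers_total
        if self.blocked_task and share >= 0.5:
            return "critical"
        if self.blocked_task or share >= 0.5:
            return "major"
        return "minor"


# Made-up findings for illustration.
findings = [
    Finding(
        summary="Login requires retyping a one-time code from memory",
        wcag_criterion="3.3.8 Accessible Authentication (Minimum)",
        testers_affected=4,
        testers_total=6,
        blocked_task=True,
    ),
    Finding(
        summary="Close icon on cookie banner is too small to hit reliably",
        wcag_criterion="2.5.8 Target Size (Minimum)",
        testers_affected=2,
        testers_total=6,
        blocked_task=False,
    ),
]

for f in findings:
    print(f"[{f.severity():<8}] {f.wcag_criterion}: {f.summary}")
```

Scoring on the share of testers affected and on whether the task was blocked, rather than on the conformance level alone, is one way to express "user impact, not just technical classification".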

Compliance Readiness

UserTesting: Not designed for compliance. You would need to manually interpret session recordings and create your own compliance documentation.

OpenScouter: Every report is compliance-ready out of the box. Findings are mapped to WCAG criteria, severity is scored, and remediation guidance is specific and actionable. Reports can be shared directly with regulators, auditors, or procurement teams as evidence of accessibility testing.

Pricing Model

UserTesting: Enterprise pricing, typically starting at $20,000+ per year for annual contracts. Per-session costs are high, and accessibility-specific testing requires custom panel recruitment at additional cost.

OpenScouter: Per-test pricing with no annual commitment required. Starter, Growth, and Enterprise tiers designed for different testing volumes. Significantly lower cost for accessibility-specific testing because the panel, data capture, and reporting are purpose-built.

When to Use Each Platform

The quick guide: UserTesting answers "is this product easy to use for the average person?" OpenScouter answers "is this product accessible to the 15-20% of users who are neurodivergent, and can I prove it to a regulator?" If you are under FCA Consumer Duty or EAA pressure, only one of those questions matters for compliance.

Use UserTesting When:

- You need general usability feedback from a broad demographic

- You are running rapid concept validation or prototype testing

- Your research question is about user preferences, not accessibility barriers

- You need a large sample size quickly (hundreds of participants)

Use OpenScouter When:

- You need to test digital accessibility with real neurodivergent users

- You need compliance evidence for FCA Consumer Duty, EAA, or Equality Act

- You want to understand cognitive load, anxiety triggers, and attention patterns

- You need structured reports that map to WCAG 2.2 and regulatory frameworks

- You are in financial services, insurance, healthcare, or government

Use Both When:

- You want broad usability data (UserTesting) plus deep accessibility insights (OpenScouter)

- You are running a comprehensive redesign and need both general and accessibility-specific feedback

The Bottom Line

UserTesting and OpenScouter are not direct competitors. They solve different problems. UserTesting tells you whether your product is easy to use for the general population. OpenScouter tells you whether your product is accessible to the 15-20% of users who are neurodivergent, and gives you the compliance evidence to prove it.

If you are under regulatory pressure to demonstrate accessibility (and in financial services, you are), general usability testing is not sufficient. You need testing with users who actually experience the barriers your product may create.

See the difference for yourself. Request a free accessibility audit and compare the depth of insight to what you get from general usability platforms.